Enterprise AI projects often follow a familiar pattern. Systems that perform well in pilots can struggle once they enter real-world environments. The problem usually lies not in the technology itself, but in the processes around it. Pilots are designed to succeed using clean data and controlled conditions. Once the system moves into production, it must handle messy inputs, conflicting instructions, and unexpected situations. Performance improves when teams clarify ownership, establish clear escalation paths, and maintain consistent oversight.

Vijayan Seenisamy, Group Transformation Lead at Woolworths Group, one of Australia's largest retail and grocery companies, has spent his career helping organizations close the gap between pilot and production. His AI ROF™ framework provides a structured approach for deploying and managing AI systems with clear ownership, accountability, and measurable outcomes. He has also led large-scale agile and digital transformations at major Australian organizations, including Bupa and Telstra, and is the author of The AI Delivery Manager Blueprint.

Seenisamy notes that many organizations still apply traditional, deterministic software practices to probabilistic AI systems. "The failure is not the model. It is the absence of everything around the model," he says. He describes a predictable pattern in which a team builds an agent, the pilot succeeds under controlled conditions, leadership reports a win, and the team moves on. The agent then operates without governance, its outputs degrade, and no one notices until the impact becomes visible. He calls this the "Demo God Curse": pilots are designed to succeed under controlled conditions, and production inevitably exposes the gaps those conditions hid.

Pilot paradise, production problems: In a pilot, the agent works with clean data, a narrow scope, and a team monitoring every output. In production, it encounters ambiguous inputs, conflicting instructions, and edge cases no one planned for. "The pilot was a controlled experiment. Production is the real world, and nobody planned for the difference," Seenisamy observes.

Ship, drift, repeat: Treating agents like conventional "ship and forget" software releases does not work for systems that continue to evolve. Unlike traditional software, an agent’s behavior changes after go-live. Without ongoing oversight, small drifts can grow into customer issues or cost overruns. "Agents are not deterministic. They make different judgment calls on different days with different data. That requires a different kind of management, and most organizations have not built it yet," says Seenisamy.

Governance frameworks are evolving to close this gap. The growing demand for agent observability suggests that companies now see deployment and ongoing management as capabilities to build together. Organizations that develop oversight alongside deployment get more reliable agents with greater impact.

Ask smarter, not harder: Successful AI deployments start with the right foundation. "Many leadership teams start in the wrong place. They ask about which tasks to automate, which is a technology question disguised as a strategy question," Seenisamy says. Focusing on ownership before cataloging an agent’s capabilities lays the foundation for sustainable deployments. Teams that start with accountability can build a growing portfolio of agents while maintaining clear responsibility and consistent results.

Ownership first, success follows: Strong AI deployments start with clear accountability, and starting with ownership helps teams turn pilot success into real-world impact. The important first step is deciding who will own the agent after it goes live, not who built it or sponsored the pilot. "It's about who is accountable when it drifts, when its outputs degrade, and when the cost of running it exceeds the value it produces," Seenisamy notes. Addressing this requires building new processes rather than just deploying capable technology.

Laying the groundwork: Effective AI work begins with the operating model surrounding the technology, not the technology itself. "Until that operating model exists, telling teams to just use AI is an instruction without infrastructure behind it," Seenisamy shares. This gap extends beyond agents; any AI capability deployed without a supporting operating model carries similar risk. Agents reveal gaps quickly because they act rather than recommend. These unmanaged actions can compound quickly at scale.

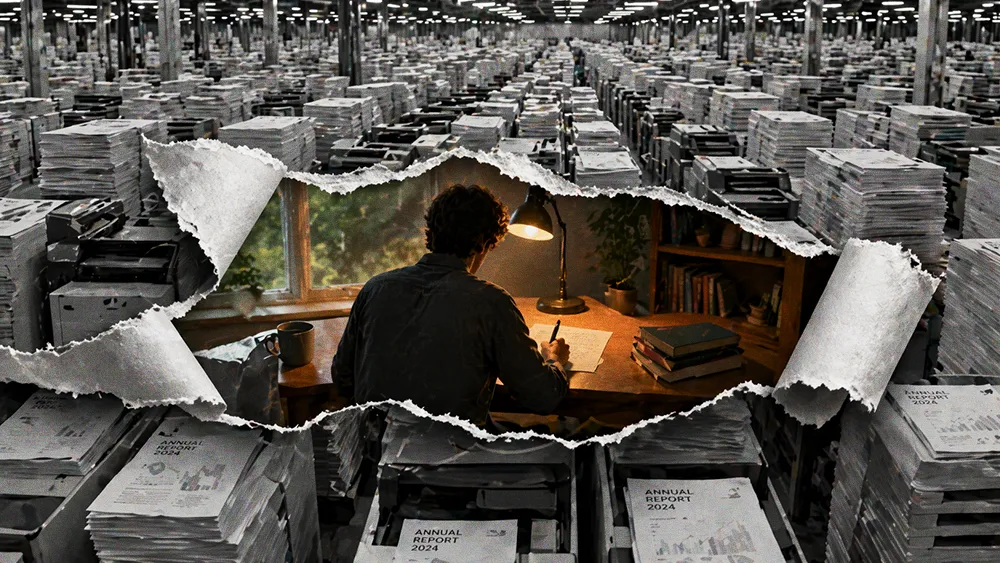

Without a clear operating model, organizations struggle to measure the true value of their AI investments, which can lead to hidden costs compounding over time.

Counting what counts: Sometimes automating a task can cost more than doing it manually. "That is negative unit economics," Seenisamy says. "The task got automated. The cost went up. And nobody noticed because the metric they tracked was volume, not value." Many automated workflows still rely on human review, error correction, and rework when clients spot mistakes, yet none of that appears on dashboards reporting efficiency gains. "The result is a portfolio that looks productive but costs more than it should."
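The arithmetic behind negative unit economics can be made concrete with a minimal sketch. It assumes that the fully loaded cost of an agent-handled task is the run cost plus the expected cost of human review and rework. The class, the function names, and every figure below are hypothetical illustrations, not part of Seenisamy's framework or of any real dashboard.

```python
from dataclasses import dataclass


@dataclass
class TaskCosts:
    """Per-task cost inputs. All fields and figures are hypothetical."""
    manual_cost: float     # cost of a human doing the task end to end
    agent_run_cost: float  # model and infrastructure cost per task
    review_cost: float     # cost of one human review of an agent output
    review_rate: float     # fraction of agent outputs that get reviewed
    rework_cost: float     # cost of correcting one faulty output
    error_rate: float      # fraction of agent outputs that need rework


def agent_unit_cost(c: TaskCosts) -> float:
    """Fully loaded cost of one agent-handled task, hidden work included."""
    return (c.agent_run_cost
            + c.review_rate * c.review_cost
            + c.error_rate * c.rework_cost)


def unit_margin(c: TaskCosts) -> float:
    """Saving per task versus manual work; negative means the agent costs more."""
    return c.manual_cost - agent_unit_cost(c)


# Illustrative numbers only: every output is reviewed, one in ten needs rework.
example = TaskCosts(manual_cost=4.00, agent_run_cost=0.50, review_cost=3.00,
                    review_rate=1.0, rework_cost=10.00, error_rate=0.10)

print(f"agent unit cost: {agent_unit_cost(example):.2f}")
print(f"margin per task: {unit_margin(example):.2f}")
```

With these made-up inputs the agent's run cost looks far cheaper than manual work, yet the margin per task is negative once review and rework are counted. A dashboard that tracks only task volume would not show this.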

The one question that matters: Before delegating any task to an agent, organizations should ask one specific question. "Is this task cheaper, faster, and more reliable with the agent, or are we just doing it because we can?" Seenisamy says. Applying this test consistently before deployment helps build portfolios with real cost efficiency rather than accumulating hidden AI debt. "Most organizations skip this step because the answer is harder to measure than a simple volume metric, and leadership rarely asks for it."

From data to dollars: Measurement drives which agents get expanded, which get cut, and whether leadership sees the true cost of AI activity. "Some agents are generating more content, more emails, more data, and none of it is attached to a business result. That is not velocity, but is rather digital debt with a good dashboard," Seenisamy says. Work overload from AI is often treated as a prioritization problem, but he views it as a measurement issue. Better prioritization cannot fix a system that does not track the right metrics. Platforms with built-in observability create the infrastructure to analyze outcomes at scale.

Until ownership is clearly defined, escalation paths are established, and outcomes are measured at the unit level, Seenisamy believes any agent deployment remains incomplete, no matter how capable the model. Pilot projects may appear successful, but in production, the gaps pilots were designed to hide become visible. The challenge is not the AI itself, but the processes, accountability, and measurement infrastructure around it. Organizations that build observability into every layer, define clear ownership, and track the true value of automation can turn pilot promise into sustained production performance, unlocking efficiency, reducing digital debt, and avoiding negative unit economics. "The guardrail is not about restricting what AI does," Seenisamy concludes. "It is about making what it does visible at the outcome level."