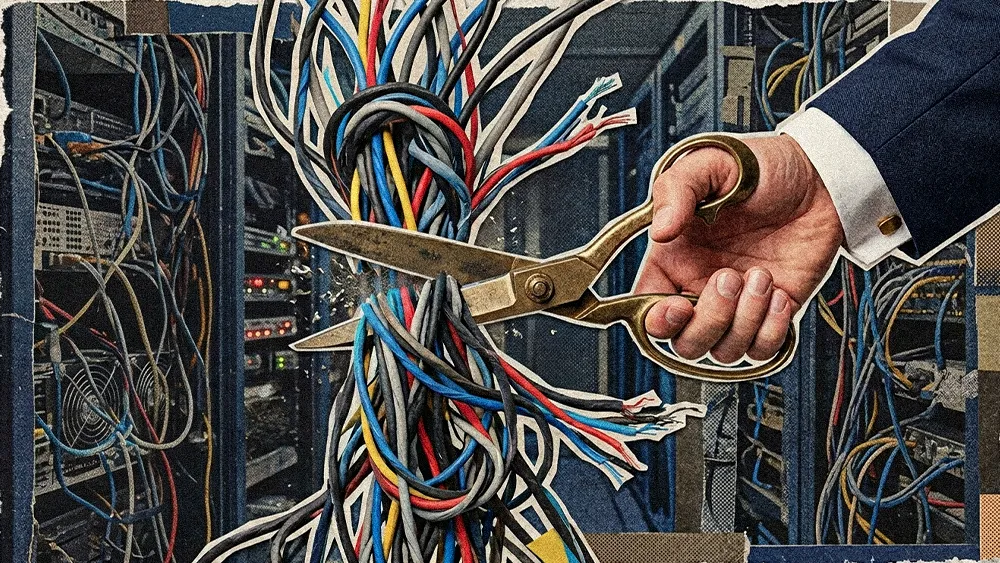

As enterprises roll out new machine learning tools, they face a familiar trade-off between speed and certainty. Many treat the added latency of verification as a tax on the speed of their app. But skipping verification is a no-go for any serious, market-ready AI software, and doing so only widens the pilot-to-production gap. For companies moving beyond basic chatbots, the real challenge is designing architectures that balance latency with robust AI governance and verifiable output.

We spoke with Andreas Hamberger, an enterprise architect who has spent more than three decades designing high-stakes enterprise systems. Currently serving as Director and Chair of the Board at enterprise tech consultancy Te Pono and Acting Integration Architect at social housing support organization Kāinga Ora, Hamberger approaches modern language models not just as a developer but as a developer-philosopher, for whom theory, ideals, and practical applications align. In his current work, he is actively trying to solve one of the industry's hardest design problems: turning AI guesswork into verifiable facts.

Right now, the industry's favorite band-aid for generative AI hallucinations is Retrieval-Augmented Generation (RAG). In Hamberger’s view, RAG on its own is too weak a safeguard for sensitive environments because its core mechanism remains probabilistic and prone to bias. He points to a New Zealand government RAG pilot that inadvertently discriminated against Māori social service beneficiaries. For Hamberger, it illustrates how quickly behavioral drift can emerge without continuous oversight.

Artificial incompetence: Hamberger views base models not as finished products, but as raw materials that require a clear eye to ensure accuracy. “AI stands for me as artificially incompetent. Without continuous verification, AI isn’t intelligence. It’s artificial incompetence.”

Programming the pushback: To stop models from acting like people-pleasers to the detriment of users, Hamberger built a rule into his system that forces the software to reject poor inputs. “Use my logical rules to teach it to push back on me and make sure that I'm logical too. No LLM does that out of the box.”

Hamberger is building what he calls the “e-corpus” to mitigate the risk of AI guessing. The e-corpus acts as a governed reference database containing only approved facts and sources. He sees this kind of strict grounding as a practical way to maintain human accountability alongside AI accountability and validation, particularly in workflows such as legal RAG, where some leaders view the threat of hallucinations as a major liability.
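To make the idea of a governed reference store concrete, here is a minimal sketch. It is not Hamberger's implementation: the `ECorpus` and `ApprovedFact` names, the normalization rule, and the sample fact are all assumptions made for illustration. The point it shows is that a lookup either returns an approved fact with its source or returns nothing, and never a guess.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class ApprovedFact:
    claim: str
    source: str  # where the approved fact comes from


class ECorpus:
    """Hypothetical governed store: only approved facts can be answered."""

    def __init__(self) -> None:
        self._facts: dict[str, ApprovedFact] = {}

    @staticmethod
    def _key(claim: str) -> str:
        # Normalize case and whitespace so trivially different phrasings match.
        return " ".join(claim.lower().split())

    def approve(self, claim: str, source: str) -> None:
        self._facts[self._key(claim)] = ApprovedFact(claim, source)

    def lookup(self, claim: str) -> Optional[ApprovedFact]:
        # Return None instead of guessing when the claim was never approved.
        return self._facts.get(self._key(claim))


corpus = ECorpus()
corpus.approve("Wellington is the capital of New Zealand", "reference-atlas")

hit = corpus.lookup("  wellington is the capital of NEW ZEALAND ")
miss = corpus.lookup("Auckland is the capital of New Zealand")
```

In this sketch `hit` carries both the claim and its source, which is what makes an answer traceable, while `miss` is `None`, leaving the caller to decide how to fail.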

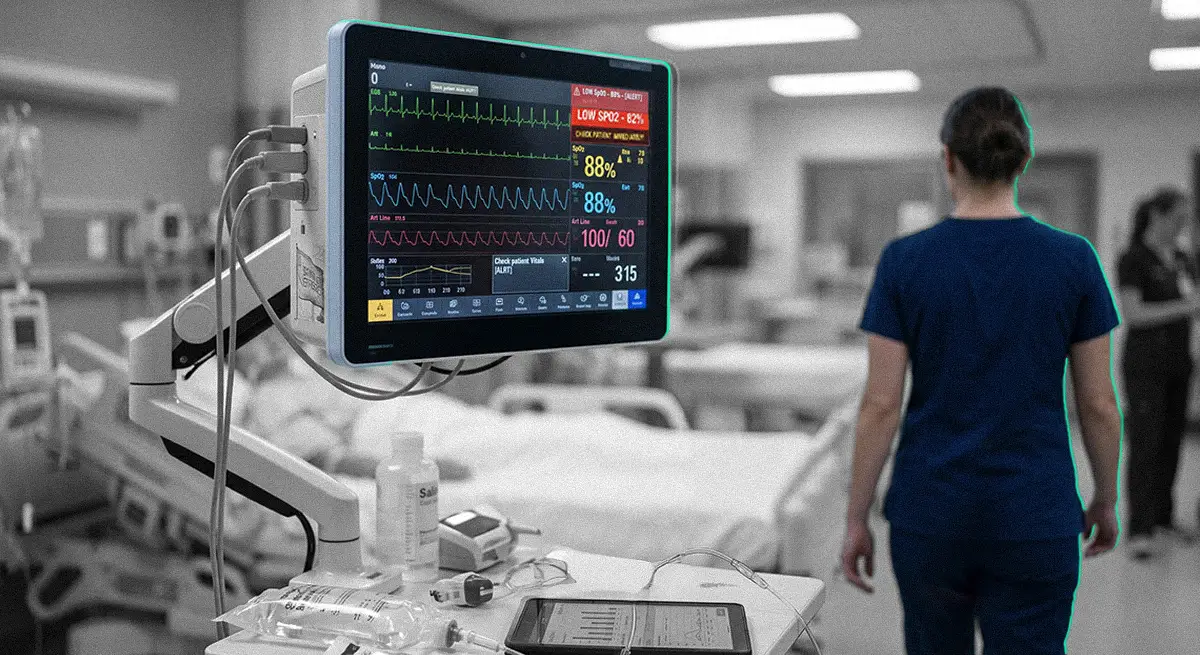

To enforce these constraints, Hamberger authored V.E.R.A., an open-source logic verification engine consisting of roughly 130,000 lines of code. V.E.R.A. is embedded into a multi-layered digital assembly line where research flows through four separate LLM “funnels” before being synthesized, with each of the 13 steps required to call the verification layer. In Hamberger's tests, this design keeps inference latency within roughly 100 to 200 milliseconds on a stateless RAM architecture while still enforcing accountability in enterprise workflows. That kind of systematic production model is crucial to accuracy and correctness in the emerging agent era.

Leashing the logic: One of the pitfalls of verification is the cross-examination process. Hamberger explains: “Each time you create an inference step, there's a chance for the LLM to go crazy. You must ensure the outcome is 100% verified, and at no point do you let it off the scientific verification leash.”

The hard-stop fail: Hamberger takes the veracity of AI results so seriously that his system mirrors human scientific rigor by halting entirely if a single fact cannot be verified. “If the model doesn't know something at the end, I will have a fail for the whole article. I'll have to go back and check and double-check certain quotes if it couldn't find the reference.”
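The combination of a verification call at every step and a hard stop on any unverified claim can be sketched in a few lines. This is an illustrative sketch, not V.E.R.A. itself: `run_pipeline`, `VerificationError`, the two toy steps, and the set-membership check standing in for the verification layer are all hypothetical.

```python
from typing import Callable, Iterable


class VerificationError(Exception):
    """Raised when a claim cannot be verified; the whole run stops."""


def run_pipeline(
    draft: list[str],
    steps: Iterable[Callable[[list[str]], list[str]]],
    is_verified: Callable[[str], bool],
) -> list[str]:
    claims = draft
    for number, step in enumerate(steps, start=1):
        claims = step(claims)
        # Every step's output goes through the verification layer
        # before the next step is allowed to run.
        for claim in claims:
            if not is_verified(claim):
                raise VerificationError(
                    f"step {number}: unverified claim: {claim!r}"
                )
    return claims


# Stand-in for a verification layer: membership in a set of approved claims.
approved = {"Wellington is the capital of New Zealand"}


def keep(claims: list[str]) -> list[str]:
    return list(claims)


def embellish(claims: list[str]) -> list[str]:
    # Simulates a step that introduces a claim nobody approved.
    return claims + ["Auckland is the capital of New Zealand"]


start = ["Wellington is the capital of New Zealand"]
clean = run_pipeline(start, [keep], approved.__contains__)

try:
    run_pipeline(start, [keep, embellish], approved.__contains__)
    halted = False
except VerificationError:
    halted = True
```

Raising an exception rather than dropping the offending claim is the design choice that matches the "fail for the whole article" behavior: nothing downstream ever sees partially verified output.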

In his own pipelines, many of the safeguards take the form of “gates”—rules that content must pass before it can move on. One of the most prominent is a “public service gate” derived from his work as a New Zealand public servant. That rule constrains tone, prohibits naming individuals, and avoids partisan commentary, effectively baking neutrality requirements directly into the logic layer. A governed language model like this offers a practical way to keep outputs from escalating agentic risk or eroding customer trust in AI.
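A gate of this kind can be thought of as a function that inspects content and reports violations, with content moving on only when every gate reports none. The sketch below is a deliberately simplistic assumption: real tone or neutrality checks would need far more than substring matching, and the `make_term_gate` helper and the placeholder term lists are invented for illustration.

```python
from typing import Callable

Gate = Callable[[str], list[str]]  # a gate returns a list of violations


def make_term_gate(label: str, banned_terms: set[str]) -> Gate:
    """Build a gate that flags any banned term (terms must be lowercase)."""

    def gate(text: str) -> list[str]:
        lowered = text.lower()
        return [
            f"{label}: {term}"
            for term in sorted(banned_terms)
            if term in lowered
        ]

    return gate


def passes_gates(text: str, gates: list[Gate]) -> tuple[bool, list[str]]:
    # Content passes only if no gate reports a violation.
    violations = [v for gate in gates for v in gate(text)]
    return (not violations, violations)


# Placeholder term lists, invented for illustration only.
public_service_gates = [
    make_term_gate("named individual", {"jane doe"}),
    make_term_gate("partisan language", {"disastrous policy"}),
]

ok, problems = passes_gates(
    "The agency published new guidance.", public_service_gates
)
blocked, reasons = passes_gates(
    "Jane Doe backed a disastrous policy.", public_service_gates
)
```

Returning the list of violations alongside the pass/fail flag keeps the gate auditable: a reviewer can see which rule stopped a piece of content, not just that it was stopped.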

For companies lacking programmatic ways to enforce clear hallucination policies in customer service, Hamberger advises deploying verification-heavy architectures in asynchronous workflows. Tasks such as AI-assisted note-taking or long-form content generation are ideal use cases, as a few extra seconds of latency are acceptable for many teams when the output is stronger and highly traceable.

The Model T of models: Comparing the industrialization of machine learning to early automated car manufacturing, Hamberger notes that while safety checks take time to scale, there's no good reason to bypass them simply because it's possible. “It's like when Henry Ford built a car, it took a week. Today, everything is automated. Robots do the welding, and in a few days, the car is done from beginning to end.”

Hamberger's career in architecture and logic has led him to a very specific view of the fundamental nature of machine learning. Ultimately, he views today's large language models not as reasoning engines, but as probabilistic Turing machines desperately trying to pass as human. Making that engine genuinely useful for the enterprise means layering on mechanisms that force it to behave more like a rigorous scientist: checking claims against evidence, pushing back on weak prompts, and failing safely when it cannot verify a fact. As the industry navigates the state of AI trust in 2026, building a verification bridge offers a viable path to turn probabilistic generation into reliable enterprise intelligence. The barriers to adoption, Hamberger says, are less technical than they are cultural. “Verification is that nexus where you actually start convincing people: this is something else.”