AI & Data Horizons: 2026

Sovereign Platforms, Agent Governance, and Human-First Execution

Executive Summary

AI is everywhere, but maturity is not.

By the end of 2025, most large enterprises had AI embedded somewhere in their stack: pilots, copilots, agents, and analytics. But beneath the surface, a small minority captures disproportionate value. The difference lies in treating AI and data as one system instead of a collection of tools.

Three pillars define AI leadership.

The organizations pulling ahead are doing three things at once: building sovereign, hybrid platforms they actually control; governing growing fleets of agents instead of scattering point solutions; and redesigning work so humans are amplified, not displaced. Those choices—platform, governance, and roles—are now the real strategic levers.

Operating models will meet AI reality in 2026.

The next year will reward leaders who move beyond scattered PoCs and tool-led experimentation. Winning teams will define a sovereign core, invest in “ready” data before spawning agents, stand up an agent governance stack, and design AI around people and vertical workflows. Everyone else will keep adding more AI—and wondering why so little of it reaches production or delivers meaningful return.

What Changed in 2025…

With AI, a lot of questions aren’t ‘if’ anymore—they’re ‘when.’ You can’t treat exponential trends with linear thinking and expect to win.

From cloud-first to sovereign, hybrid-by-design.

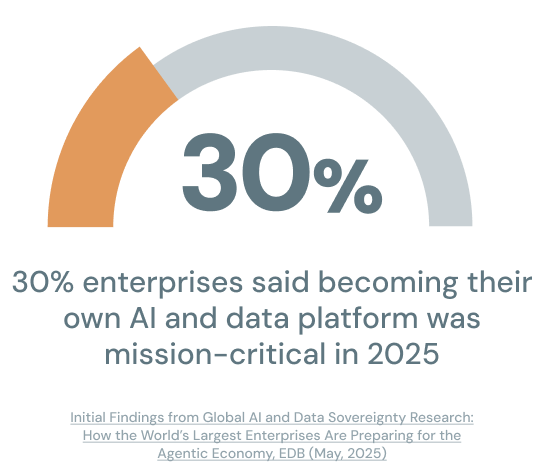

Last year marked the end of “cloud-first” and the rise of sovereign hybrid infrastructure. In 2025, 30% of large enterprises said becoming their own AI and data platform was mission-critical—and that figure is expected to reach 95% within the next three years.

TiVo's Latest Video Trends Reveals Growing Consumer Interest in Video Services Bundles Over Fragmented Streaming Experiences, Businesswire (October, 2025)

Sovereignty used to be about data. Now it’s equally about technology and the infrastructure itself.

Data location became a board-level concern.

Meanwhile, boards and governments stopped treating data location as a pure compliance issue and started treating it as a strategic constraint. Concerns about dependency on US hyperscalers, national security, and 10x AI power demands all pushed organizations to reconsider where and how their data and models live.

Sovereignty expanded to hardware, edge, and cloud regions.

Traditional data centers struggled with GPU density, east–west traffic, and power. At the same time, NPU- and inference-optimized chips made it cheaper and safer to push certain workloads to the edge, keeping sensitive data on devices.

Latency, privacy, and power are where edge wins.

The data centers we built for non-AI workloads will not cut it for AI. GPUs, storage, networking, power: everything has to be rethought for training and inference at scale.

2026 Playbook for AI Data Leaders

1. Define your sovereign core.

Build a sovereign reference architecture.

Decide what must stay under your control, then tier your data, models, and workloads into sovereign-only, hybrid, and cloud-friendly. Assign each tier a default landing zone and explicit rules for movement across on-prem, private cloud, and public cloud. Publish the result as a sovereign reference architecture so every AI project starts with an approved runtime and data boundary.
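To make the tiering concrete, here is a minimal Python sketch of a tier policy, assuming three environments (on-prem, private cloud, public cloud) and the three tiers named above. The `Tier`, `Env`, `POLICY`, `landing_zone`, and `may_move` names, and the specific environments each tier may use, are illustrative assumptions rather than a prescribed design.

```python
from enum import Enum


class Env(Enum):
    ON_PREM = "on-prem"
    PRIVATE_CLOUD = "private-cloud"
    PUBLIC_CLOUD = "public-cloud"


class Tier(Enum):
    SOVEREIGN_ONLY = "sovereign-only"
    HYBRID = "hybrid"
    CLOUD_FRIENDLY = "cloud-friendly"


# Hypothetical policy: each tier has a default landing zone and an
# explicit set of environments it is allowed to move into.
POLICY = {
    Tier.SOVEREIGN_ONLY: (Env.ON_PREM, {Env.ON_PREM}),
    Tier.HYBRID: (Env.PRIVATE_CLOUD, {Env.ON_PREM, Env.PRIVATE_CLOUD}),
    Tier.CLOUD_FRIENDLY: (
        Env.PUBLIC_CLOUD,
        {Env.ON_PREM, Env.PRIVATE_CLOUD, Env.PUBLIC_CLOUD},
    ),
}


def landing_zone(tier: Tier) -> Env:
    """Where new data, models, or workloads in this tier start by default."""
    return POLICY[tier][0]


def may_move(tier: Tier, target: Env) -> bool:
    """True if the tier's movement rules permit running in the target environment."""
    return target in POLICY[tier][1]


# Example: a sovereign-only workload may not be moved to public cloud.
print(landing_zone(Tier.SOVEREIGN_ONLY).value)          # on-prem
print(may_move(Tier.SOVEREIGN_ONLY, Env.PUBLIC_CLOUD))  # False
```

Keeping the policy as data rather than scattered conditionals means the published reference architecture and the enforcement code can be reviewed from the same source.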

Encode control and portability into standards.

Turn sovereignty into enforceable patterns, like “agent logs and prompts write to Postgres in our sovereign tenant.” Set vendor criteria that avoid lock-in at the core, including no dependency on proprietary storage formats or closed vector databases. Align investment to the architecture, including GPU/LPU capacity planning, lakehouse components, Postgres AI, and edge footprint where needed. Track sovereign share of AI workloads to confirm critical use cases are actually running where your control and compliance requirements demand.
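The sovereign-share metric reduces to a simple ratio. This sketch assumes each AI workload is recorded with its current runtime environment and a criticality flag, and that on-prem and private-cloud environments count as sovereign; the `Workload` record, the `SOVEREIGN_ENVS` set, and `sovereign_share` are hypothetical and should reflect your own reference architecture.

```python
from dataclasses import dataclass

# Assumption: these environments sit inside your sovereign boundary.
SOVEREIGN_ENVS = {"on-prem", "private-cloud"}


@dataclass
class Workload:
    name: str
    env: str        # where the workload actually runs today
    critical: bool  # flagged as a critical use case


def sovereign_share(workloads, critical_only=False):
    """Fraction of (optionally only critical) AI workloads running in sovereign environments."""
    pool = [w for w in workloads if w.critical or not critical_only]
    if not pool:
        return 0.0
    return sum(w.env in SOVEREIGN_ENVS for w in pool) / len(pool)


inventory = [
    Workload("claims-agent", "on-prem", critical=True),
    Workload("faq-chat", "public-cloud", critical=False),
    Workload("fraud-model", "private-cloud", critical=True),
    Workload("doc-summarizer", "public-cloud", critical=True),
]
print(sovereign_share(inventory))                                # 0.5
print(round(sovereign_share(inventory, critical_only=True), 2))  # 0.67
```

Reporting the critical-only share separately matters: an overall figure can look healthy while the workloads that most need sovereign placement are the ones running outside it.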

2. Fix “ready data” before building more agents.

Reduce ambiguity with knowledge inventories and canonical data models.

If agents sit on inconsistent schemas and stale definitions, they will produce confident nonsense at scale. Inventory the 20–30 systems and repositories that hold decision-critical knowledge, including operational apps, wikis, SharePoint, and data lakes. Define a small canonical data model around shared business objects, like Customer, Product, Asset, Case, and Transaction, and assign owners and data contracts so domains cannot drift silently.
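A data contract can be as small as a declared owner plus the expected fields and types for a canonical object. The sketch below assumes contracts are checked in a pipeline before records reach downstream consumers; `DataContract` and its `violations` method are hypothetical names, not a specific library's API.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class DataContract:
    entity: str    # canonical business object, e.g. "Customer"
    owner: str     # accountable domain team
    fields: dict   # field name -> expected Python type

    def violations(self, record: dict) -> List[str]:
        """List every way a record breaks the contract; empty means it conforms."""
        problems = []
        for name, expected in self.fields.items():
            if name not in record:
                problems.append(f"missing field: {name}")
            elif not isinstance(record[name], expected):
                got = type(record[name]).__name__
                problems.append(f"{name}: expected {expected.__name__}, got {got}")
        # Fields nobody declared are flagged too, so schemas cannot drift silently.
        for name in sorted(record.keys() - self.fields.keys()):
            problems.append(f"undeclared field: {name}")
        return problems


customer = DataContract(
    entity="Customer",
    owner="crm-domain-team",
    fields={"customer_id": str, "segment": str, "lifetime_value": float},
)
print(customer.violations({"customer_id": 42, "segment": "smb", "region": "EU"}))
```

Flagging undeclared fields as well as missing ones is what stops silent drift: a new column has to be added to the contract, and therefore brought to the owner's attention, before it is accepted.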

Make answers traceable with metadata, lineage, and automated governance.

Make it easy to answer “where did this answer come from?” and “who owns this field?” by treating metadata and lineage as product features, not documentation chores. Automate PII masking, retention, and quality checks through policies and pipelines so governance runs by default. Only attach RAG, copilots, or agents once a domain meets your minimum readiness bar. Track time-to-insight for key decisions to prove AI is shortening the cycle between signal and action in core workflows.
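A minimum readiness bar can be expressed as an explicit gate that runs before any RAG pipeline, copilot, or agent is attached to a domain. The criteria and thresholds here (an accountable owner, 90% lineage coverage, enforced PII masking, a 95% quality-check pass rate) are illustrative assumptions, as are the `DomainStatus`, `readiness_gaps`, and `can_attach_agents` names.

```python
from dataclasses import dataclass
from typing import List

# Hypothetical minimum readiness bar; tune these to your own standards.
MIN_LINEAGE_COVERAGE = 0.90
MIN_QUALITY_PASS_RATE = 0.95


@dataclass
class DomainStatus:
    name: str
    has_owner: bool
    lineage_coverage: float      # share of fields with recorded lineage, 0..1
    pii_masking_enforced: bool   # masking applied by policy, not by convention
    quality_pass_rate: float     # share of automated quality checks passing, 0..1


def readiness_gaps(d: DomainStatus) -> List[str]:
    """Reasons a domain is not yet ready for RAG, copilots, or agents."""
    gaps = []
    if not d.has_owner:
        gaps.append("no accountable owner")
    if d.lineage_coverage < MIN_LINEAGE_COVERAGE:
        gaps.append("lineage coverage below bar")
    if not d.pii_masking_enforced:
        gaps.append("PII masking not enforced")
    if d.quality_pass_rate < MIN_QUALITY_PASS_RATE:
        gaps.append("quality checks below bar")
    return gaps


def can_attach_agents(d: DomainStatus) -> bool:
    return not readiness_gaps(d)


assets = DomainStatus("Asset", has_owner=True, lineage_coverage=0.6,
                      pii_masking_enforced=False, quality_pass_rate=0.99)
print(readiness_gaps(assets))  # ['lineage coverage below bar', 'PII masking not enforced']
```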

3. Stand up an agent governance stack.

Make agent ownership visible with a real registry.

Treat agents as production systems with a purpose, an accountable owner, and explicit access. Maintain an agent registry that captures each agent’s role, tools, data permissions, runtime environment, and deployment status. Block deployment paths that bypass registration so “shadow agents” stop multiplying.
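An agent registry does not need to be elaborate to block shadow deployments; what matters is that the deployment path consults it. This in-memory Python sketch assumes a registry record with the fields listed above and a deployment step that refuses unregistered agents. `AgentRecord`, `AgentRegistry`, and the status values are hypothetical; a production registry would persist records and sit in front of your actual deployment tooling.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class AgentRecord:
    agent_id: str
    purpose: str
    owner: str                   # accountable person or team
    role: str
    tools: List[str]
    data_permissions: List[str]
    runtime_env: str
    status: str = "registered"   # hypothetical lifecycle: registered -> deployed


class AgentRegistry:
    def __init__(self) -> None:
        self._agents: Dict[str, AgentRecord] = {}

    def register(self, record: AgentRecord) -> None:
        if not record.owner:
            raise ValueError(f"{record.agent_id}: every agent needs an accountable owner")
        self._agents[record.agent_id] = record

    def deploy(self, agent_id: str) -> AgentRecord:
        """The only deployment path: unregistered ('shadow') agents are refused."""
        record = self._agents.get(agent_id)
        if record is None:
            raise PermissionError(f"{agent_id} is not registered; deployment blocked")
        record.status = "deployed"
        return record


registry = AgentRegistry()
registry.register(AgentRecord(
    agent_id="claims-triage",
    purpose="Route incoming claims to the right queue",
    owner="claims-operations",
    role="triage",
    tools=["case_lookup"],
    data_permissions=["Case:read"],
    runtime_env="sovereign-tenant",
))
print(registry.deploy("claims-triage").status)  # deployed
```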

Control behavior in runtime with guardrails and AI observability.

Define what agents can do, not just what they can access, including transaction thresholds, approval requirements, and logging rules that prevent PII leakage. Add AI observability so you can trace agent actions end-to-end, monitor success and failure patterns, detect drift, and manage cost. Use supervisory controls or guardian agents to flag policy violations and anomalies early. Track agent inventory health by comparing registered agents to agents discovered outside the registry, and drive the gap down over time.
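Two of the controls above lend themselves to small, testable rules: a per-action threshold that decides whether an agent may act alone, and the inventory-health gap between registered and discovered agents. In this sketch the action kinds, the limits in `AUTO_APPROVE_LIMIT`, and the `decide` and `inventory_gap` functions are hypothetical; unknown action kinds are denied by default.

```python
from dataclasses import dataclass
from typing import Iterable, List

# Hypothetical policy: the largest amount an agent may execute without a human.
# Action kinds not listed here are denied by default.
AUTO_APPROVE_LIMIT = {"refund": 500.0, "credit_limit_change": 0.0}


@dataclass
class Action:
    agent_id: str
    kind: str
    amount: float = 0.0


def decide(action: Action) -> str:
    """Return 'allow', 'needs_human_approval', or 'block' for a proposed action."""
    limit = AUTO_APPROVE_LIMIT.get(action.kind)
    if limit is None:
        return "block"
    if action.amount > limit:
        return "needs_human_approval"
    return "allow"


def inventory_gap(registered: Iterable[str], discovered: Iterable[str]) -> List[str]:
    """Agents observed running that are missing from the registry."""
    return sorted(set(discovered) - set(registered))


print(decide(Action("refund-bot", "refund", 120.0)))  # allow
print(decide(Action("refund-bot", "refund", 900.0)))  # needs_human_approval
print(inventory_gap(["refund-bot"], ["refund-bot", "sales-helper"]))  # ['sales-helper']
```

Tracking the length of the inventory gap over time gives the inventory-health trend described above, with the goal of driving it toward zero.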

4. Design AI around people and roles.

Start with decision workflows instead of “AI features.”

Map 5–10 roles that carry real operational leverage, such as CIO, CDO, product manager, risk analyst, SRE, and frontline manager. Identify the decisions each role makes weekly, the information they chase manually, and the handoffs that cause waiting. Design copilots and agents around those decision flows so AI reduces friction where work actually happens.

Put copilots inside the tools teams already use.

Embed AI in existing systems like IDEs, BI platforms, ticketing systems, and CRMs, then remove search, summarization, and coordination work from people’s day. Train people on when to trust, question, and override AI, and update job expectations so “AI present” becomes a normal operational context. Formalize hybrid roles, including AI product owner, agent manager, data steward, and model risk lead, so accountability does not collapse into ambiguity. Track human adoption and satisfaction to verify people keep using the tools and believe the outputs help.

5. Bet on vertical workflows.

Prove measurable lift in industry-specific processes.

Generic chat and summarization will not differentiate your program in 2026. Pick a small set of flagship workflows per business unit, such as claims adjudication, credit limit changes, preventive maintenance, patient intake, or churn saves, then define the before-and-after journey. Make it explicit where AI assists, where agents act, and where humans must approve, especially in regulated or safety-critical steps.
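Making the split between assisting, acting, and approving explicit can be as simple as recording a mode per workflow step and checking it against a rule. This sketch assumes a claims adjudication workflow with three hypothetical steps and one rule: a regulated step must not be left to an agent acting on its own. The step names, the `Mode` values, and `misconfigured_steps` are illustrative.

```python
from enum import Enum
from typing import List, Tuple


class Mode(Enum):
    AI_ASSISTS = "assist"       # AI drafts or recommends; a person acts
    AGENT_ACTS = "act"          # agent executes within its guardrails
    HUMAN_APPROVES = "approve"  # a person signs off before anything happens


# Hypothetical "after" design for claims adjudication: (step, mode, regulated?)
CLAIMS_WORKFLOW: List[Tuple[str, Mode, bool]] = [
    ("intake and document extraction", Mode.AGENT_ACTS, False),
    ("coverage check", Mode.AI_ASSISTS, True),
    ("payout decision", Mode.HUMAN_APPROVES, True),
]


def misconfigured_steps(workflow: List[Tuple[str, Mode, bool]]) -> List[str]:
    """Regulated steps that have been handed to an agent acting on its own."""
    return [step for step, mode, regulated in workflow
            if regulated and mode is Mode.AGENT_ACTS]


print(misconfigured_steps(CLAIMS_WORKFLOW))  # []
```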

Run a two-quarter graduation rule for pilots.

Attach outcome metrics that the business already respects, including time-to-decision, error rate, regulatory exceptions, NPS/CSAT, upsell rate, and loss ratio. Build on your sovereign core and ready data so wins are repeatable, not one-off demos. Pause or kill pilots that cannot tie to a vertical workflow and a measurable outcome within two quarters. Track ROI-anchored AI spend to keep experimentation disciplined and to force clear decisions about which efforts become platform capabilities.
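The two-quarter graduation rule can be written as a decision function. The sketch assumes each pilot records its start date, the vertical workflow it is tied to (if any), and a measured lift on an outcome metric the business already tracks (or `None` if nothing has been measured yet). `Pilot`, `quarters_elapsed`, `graduation_decision`, and the returned labels are hypothetical names.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class Pilot:
    name: str
    started: date
    workflow: Optional[str]        # vertical workflow the pilot is tied to, if any
    outcome_lift: Optional[float]  # measured improvement on a business metric; None if unmeasured


def quarters_elapsed(start: date, today: date) -> int:
    """Whole quarters since the pilot started, counted in calendar months."""
    months = (today.year - start.year) * 12 + (today.month - start.month)
    return months // 3


def graduation_decision(p: Pilot, today: date) -> str:
    tied_and_measured = p.workflow is not None and p.outcome_lift is not None
    if tied_and_measured and p.outcome_lift > 0:
        return "graduation_candidate"
    if quarters_elapsed(p.started, today) < 2:
        return "continue"
    return "pause_or_kill"


today = date(2026, 7, 1)
print(graduation_decision(Pilot("claims-copilot", date(2026, 1, 15), "claims adjudication", 0.12), today))  # graduation_candidate
print(graduation_decision(Pilot("generic-chat", date(2026, 1, 15), None, None), today))  # pause_or_kill
```

A positive lift only makes a pilot a candidate; the decision about whether it becomes a platform capability still belongs to the people accountable for ROI-anchored spend.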

From PoC agents to governed production fleets.

2025 was the year agentic AI advanced from isolated proofs of concept to sprawling fleets of agents—forcing organizations to confront governance as a first-order problem.

The last 18 months were about GenAI. This year is about your first agent. The next 12 to 18 months will be about fleets of agents working together: specialized, orchestrated, and industry-specific.

Many AI deployments stalled at PoC.

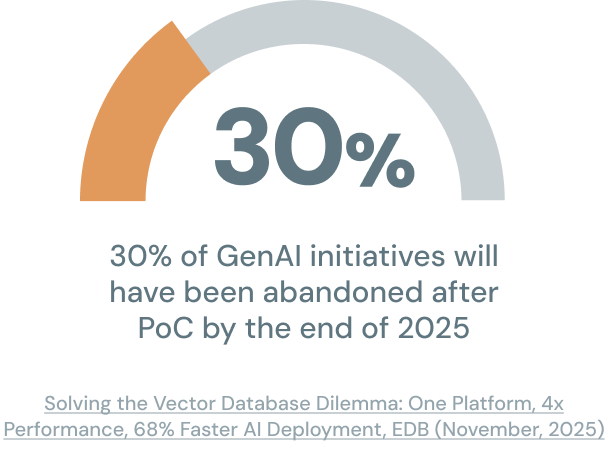

In 2024, Gartner predicted that at least 30% of generative AI projects would be abandoned after proof of concept by the end of 2025, citing poor data quality, inadequate risk controls, escalating costs, or unclear business value as the primary causes.

More capable agents also meant more risk.

Most LLMs are optimized to produce code and actions that “work,” not ones that are secure or compliant. Once those models are wrapped in agents with tool access and autonomy, the attack surface expands dramatically.

If you don’t have AI observability and an AI registry, you’ll lose control of governance, cost, and intelligence. Agentic work will sprawl across the company.

AI governance became an operational reality.

As a result, many organizations realized they needed agent registries, runtime guardrails, and cross-functional “AI Offices” to mitigate these risks. From 2024 to 2025, 65% of organizations reported regular use of generative AI, almost double the prior year’s rate. Yet only about 1% of organizations believe they have reached full AI maturity.

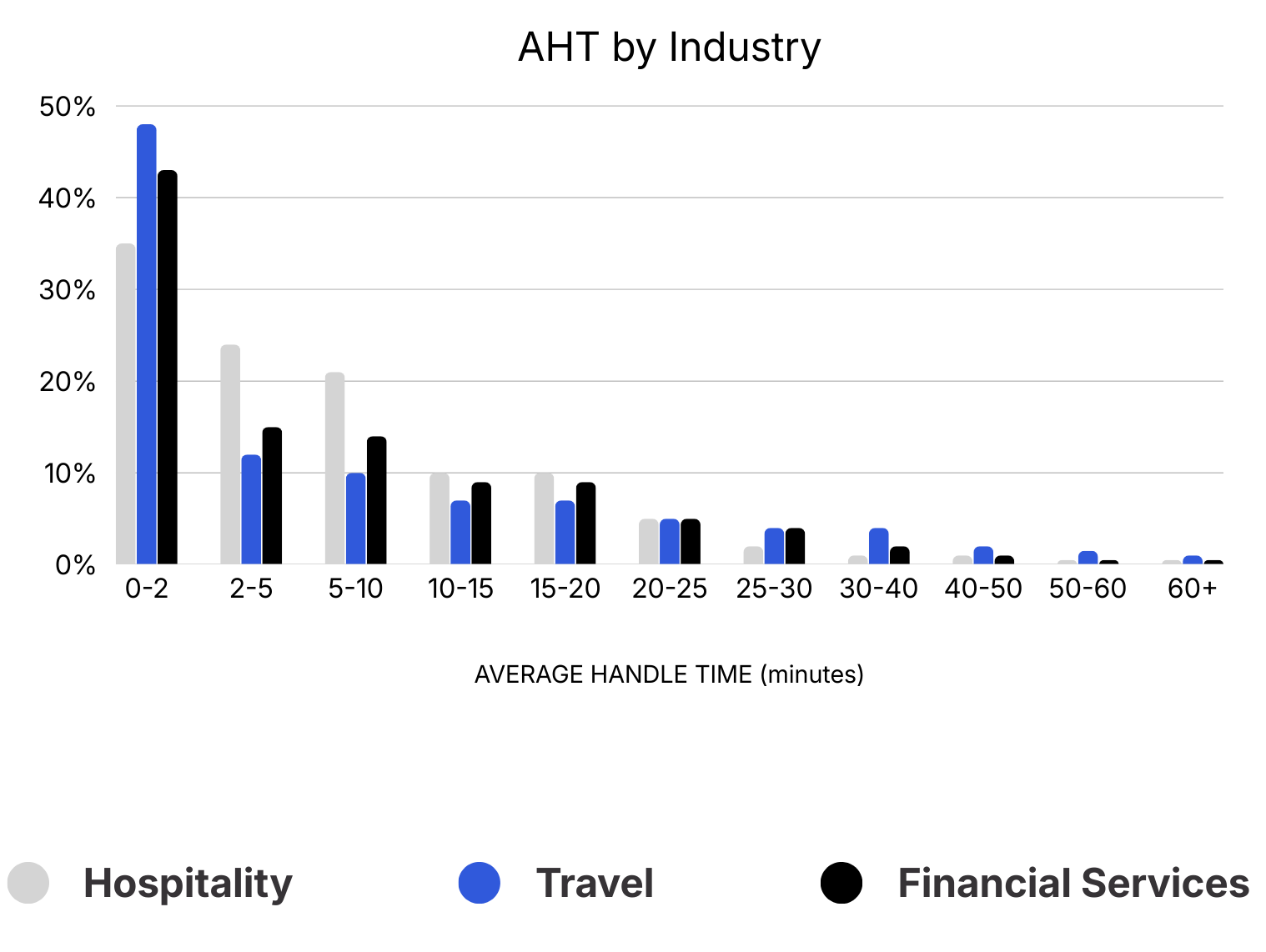

Legacy metrics don’t deliver value.

Fewer than 20% of AI-handled conversations reach successful resolution. In other words, most AI systems today assist with parts of the interaction, but still need humans to close the loop.

Why Driving Down Average Handle Time is Costing You Money, Cresta (2024).

Coaching patterns show a similar mismatch.

In most cases, increasing the number of coaching sessions does not reliably improve behavioral adherence. Because sessions aren't targeted at the specific behaviors driving outcomes, many teams spend more time in coaching meetings without seeing clear gains.

The value is still in the conversation with the customer. You need context and judgment to decide what actually matters.

Agentic AI is introducing risk.

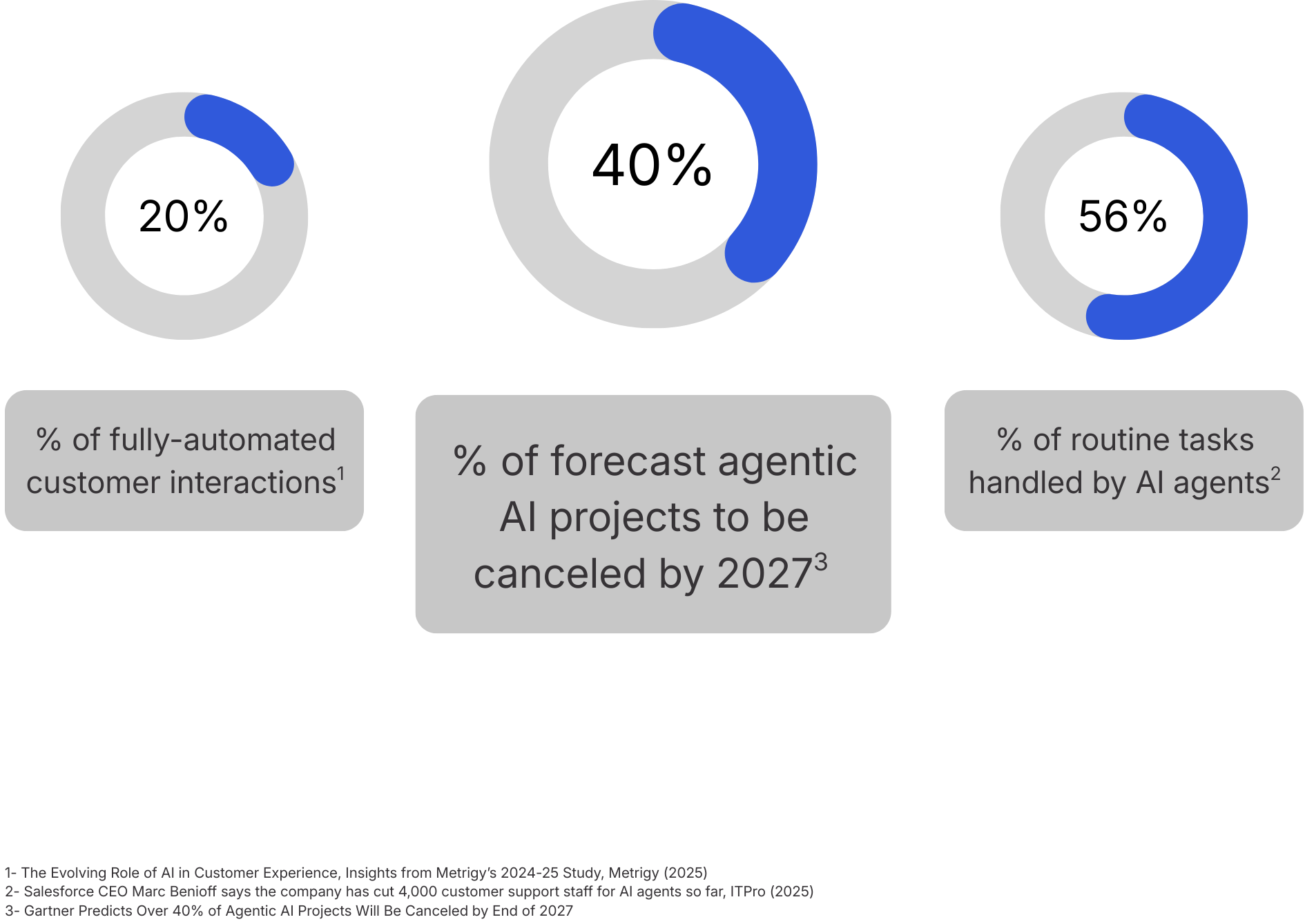

Agentic AI now behaves more like a junior employee than a script. AI is already fully automating around 20% of customer interactions for some organizations, with leaders expecting that share to rise.

In many small and mid-sized contact centers, executives report that AI agents now handle 30–60% of routine tasks, particularly in self-service and low-complexity workflows.

But adoption is still early, and the risk is real.

Gartner forecasts that more than 40% of agentic AI projects will be canceled by the end of 2027 due to escalating costs, unclear business value, or weak risk controls.

In banking, the key question is not just ‘does this work?’ but ‘can we explain it?’

If AI starts making suggestions without the right data or consent, we can lose trust that took years to build.

That combination of high ambition, high failure risk, and growing complexity shapes the landscape for 2026.

Leaders now have to treat agentic AI as part of the workforce and the operating model, not as a set of point solutions, and they need to set clear expectations for where AI will act independently and where humans must remain in the loop.

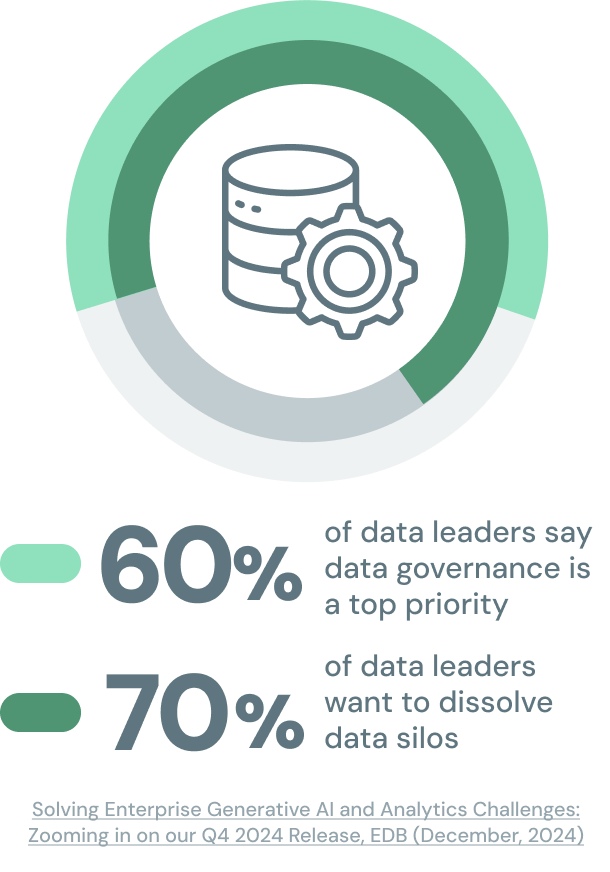

From “more data” to “ready data.”

Enterprises began shaping AI around their own data and infrastructure in 2025. For 60% of data leaders, data governance was the top priority last year, and 70% of organizations are actively looking to break down data silos and rein in data sprawl to power better BI and sustainable growth.

The AI winners in 2025 were those with “ready data”—modeled, contextual, and traceable enough for agents to act on. BI and analytics teams that once worked on quarterly reporting cycles suddenly faced real-time expectations from AI systems and executives.

AI and data are no longer just tools. They should be your sovereign assets in this new world.

Data governance decided the winners.

LLMs could sit on top of messy sources, but without canonical models, metadata, and governance, the result was wrong answers faster. In contrast, organizations that made AI and data sovereignty mission-critical achieved 5x higher ROI from AI than their peers.

Leaders shaped AI around the business.

Instead of transforming their business around opaque APIs, leaders began fine-tuning models on sovereign data, inside sovereign tenants, and pairing those models with vector stores and RAG on top of canonical schemas.

Until recently, businesses were reshaping themselves around AI. Now, you can finally shape AI around your business.

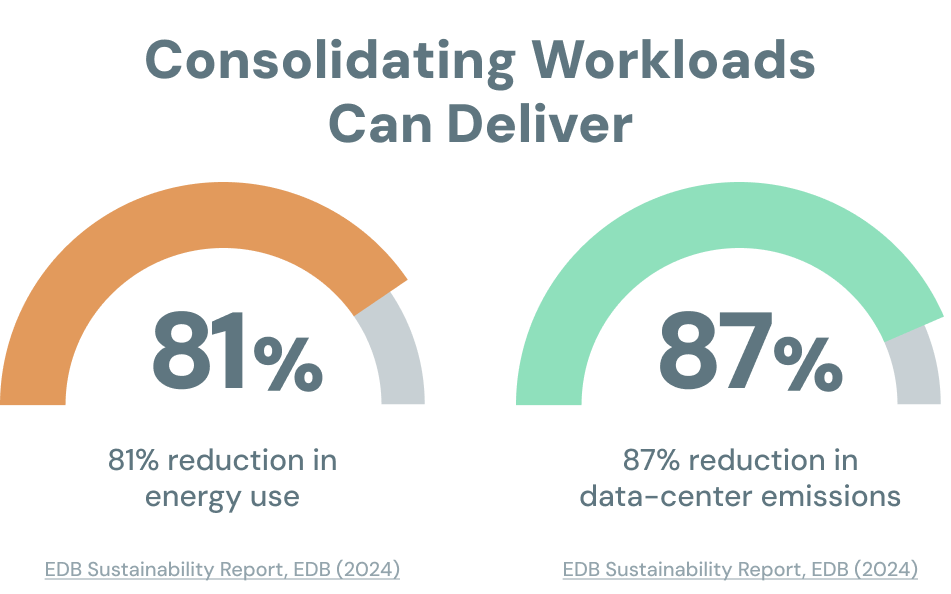

Architecture impacted cost and ESG.

Meanwhile, architecture choices drive both cost and ESG outcomes. For some enterprises, consolidating workloads onto EDB Postgres AI has delivered up to 81% reductions in energy use and 87% reductions in data-center emissions.

AI Data Leaders Across Industries

How sovereign platforms, agent governance, and human-first execution manifested across major industries.

Healthcare

Leaders in healthcare are using AI to sharpen “the system around the visit”—diagnostics, workflows, and patient experience—without displacing clinicians. They treat imaging, telemetry, and patient histories as sovereign assets, then layer agents on top for tasks like summarizing notes, drafting discharge plans, and surfacing prior guidance, always with humans in the loop.

On the patient side, they design journeys where context and preferences follow the person across channels, within strict privacy and consent boundaries.

Healthcare is 18% of US GDP. The question isn’t whether AI will change that curve, but how fast we can integrate it into the system without breaking trust.

In a world where technology and data are the two great equalizers, if you have to differentiate your company versus others, you have to have a brand that stands for something.

Financial Services

In financial services, AI sits at the intersection of risk, regulation, and customer trust. Banks and insurers are adopting sovereign and hybrid data platforms, then deploying fleets of agents across fraud, risk, and customer operations—with strict auditability and human sign-off for high-stakes decisions.

On the CX side, copilots support agents with real-time guidance and personalized offers; on the strategy side, leaders link AI to brand and narrative, knowing that models and data are quickly commoditized. Differentiation comes from what the brand stands for and how clearly it can explain AI-supported decisions to customers, boards, and regulators.

Manufacturing

Manufacturers treat AI as an extension of operational excellence, not a shortcut. Before deploying agents for maintenance, quality, or scheduling, leaders build canonical data models of machines, batches, and events, and invest in pipelines that keep these models clean and current.

Hybrid and edge patterns are now standard: real-time inference near the line, heavier analytics and retraining in sovereign or hybrid clouds. Agents watch for anomalies, recommend actions, and draft investigations, but humans still make the call when safety, uptime, or scrap are on the line.

You can’t just dump messy plant data into an LLM and call it Industry 4.0.

Think of universities as extensions of your R&D department.

Higher Education

Universities are treating AI as both curriculum change and operating leverage: building shared data platforms for research and student success, while deploying agents for advising, enrollment, and admin work. The most effective institutions don’t try to “AI-wash” everything—they pair academic governance with IT to set clear rules for GenAI in teaching, assessment, and services.

At the same time, they position themselves as applied AI partners for local industry, especially in manufacturing and engineering, using projects and labs to solve real sovereign data, edge, and automation problems.

Public Sector

Public-sector AI leaders are combining national-scale infrastructure with “people-first AI.” Governments are investing in sovereign and hybrid platforms so citizen data and critical systems remain under their legal control, while using agents to improve services: smarter routing of inquiries, copilots for caseworkers, and assistants that speed legal or benefits decisions.

Rather than reducing headcount, the best examples amplify civil servants with training and tools, and use AI for public health and climate-health integration where equity and transparency expectations are highest.

If we deploy AI in public health—prevention, primary care, climate-health—we can scale evidence across real populations.

In pharma, you don’t test on patients. You can’t let a model self-update in production just because it’s ‘learning.’ You monitor performance on the test side, then a human decides when to promote the new model.

Biopharma & Life Sciences

In life sciences, AI is embedded across the pipeline—from discovery and trial design to safety and manufacturing—but always under tight governance. Leaders treat genomic, trial, and IP data as highly sovereign, bring models to the data, and use agents as specialist colleagues to check submissions against regulations, draft responses, and scan documents for risk.

Crucially, they separate test and production: models are monitored and refined in controlled environments, and only promoted when humans are satisfied that performance and risk are acceptable.
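The test-to-production separation described above can be sketched as a promotion gate that never lets a model promote itself. This Python example assumes a candidate is scored in a controlled test environment and that promotion requires both passing metrics and a named human approver. `CandidateModel`, `Deployment`, `promote`, and the accuracy threshold are hypothetical, and the single accuracy figure stands in for whatever performance and risk criteria your validation process actually uses.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class CandidateModel:
    version: str
    test_accuracy: float     # measured in the controlled test environment
    open_risk_findings: int  # unresolved issues from validation review


@dataclass
class Deployment:
    production_version: str


def promote(dep: Deployment, cand: CandidateModel,
            min_accuracy: float, approved_by: Optional[str]) -> bool:
    """Swap the production model only when metrics pass AND a named human approves."""
    if cand.test_accuracy < min_accuracy or cand.open_risk_findings > 0:
        return False  # candidate stays in test; production is untouched
    if not approved_by:
        return False  # no self-promotion just because the model is "learning"
    dep.production_version = cand.version
    return True


prod = Deployment(production_version="v1")
candidate = CandidateModel(version="v2", test_accuracy=0.93, open_risk_findings=0)
print(promote(prod, candidate, min_accuracy=0.90, approved_by=None))               # False
print(promote(prod, candidate, min_accuracy=0.90, approved_by="validation-lead"))  # True
print(prod.production_version)                                                     # v2
```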

The Next AI & Data Horizon

By the end of 2025, the most significant questions about AI and data were no longer technical. The real divide now is maturity: whether you’ve built sovereign foundations, can actually see and govern your growing fleet of agents, and have redesigned work so humans are amplified. That arc matches what EDB’s experts anticipated; this report grounds it in the lived reality of AI data leaders.

From here, progress will come from making a short, concrete roadmap against those three dimensions: hardening your sovereign core, standing up an agent governance stack, and investing in human-first operating models.

Organizations that treat those as one integrated system will be the ones that turn AI from a collection of pilots into a durable advantage. Together, sovereign foundations, governed agent fleets, and human-first execution form the operating system for AI-driven enterprises in 2026 and beyond.

EDB Postgres AI Delivers

4x faster vector queries

18x greater storage efficiency

68% faster AI deployment

Solving the Vector Database Dilemma: One Platform, 4x Performance, 68% Faster AI Deployment, EDB (November, 2025)

Signals from the Field

Methodology

This AIDP editorial report draws on a mix of qualitative and quantitative inputs to capture how AI is changing modern data platforms, and what “AI-ready” looks like in practice for enterprise teams.

First, we synthesized quantitative signals including published market and benchmark research on AI adoption, data growth, cloud and infrastructure spend, and operational constraints such as latency, reliability, and power. We used these sources to ground the report’s claims about where AI workloads are expanding fastest, why platform complexity is rising, and which architecture decisions (data locality, portability, observability, security controls) are becoming non-negotiable.

Second, we conducted interviews with data and infrastructure leaders and practitioners shaping AI-enabled platforms inside real organizations. These conversations covered how teams define a “sovereign core,” how they decide what stays controlled versus what can move, how they govern model and agent workloads, and how they measure progress beyond “we shipped a pilot.” Interviewees included CIO- and CTO-level leaders, heads of data and AI, platform and database architects, security and risk stakeholders, and operators responsible for reliability and cost.

Finally, the editorial team compared patterns across the research inputs and interview transcripts to identify where AI data platforms are holding up, where they break, and what leading teams do differently. We translated those recurring patterns into the report’s practical guidance, including decision frameworks for workload placement, operating-model guardrails for governance and change control, and metrics that help leaders prove outcomes while reducing platform risk.

The result is a grounded snapshot of AI data platform strategy in 2025–2026 and a clear methodology for turning scattered AI initiatives into an architecture and operating model that can scale.